Watch the related video course together with the written tutorial to deepen your understanding: Web Scraping With Beautiful Soup and Python

The incredible amount of data on the Internet is a rich resource for any field of research or personal interest. To effectively harvest that data, you’ll need to become skilled at web scraping. The Python libraries requests and Beautiful Soup are powerful tools for the job. If you like to learn with hands-on examples and have a basic understanding of Python and HTML, then this tutorial is for you.

In this tutorial, you’ll inspect the HTML structure of your target site with your browser’s developer tools, use requests and Beautiful Soup for scraping and parsing data from the Web, and step through a web scraping pipeline from start to finish.

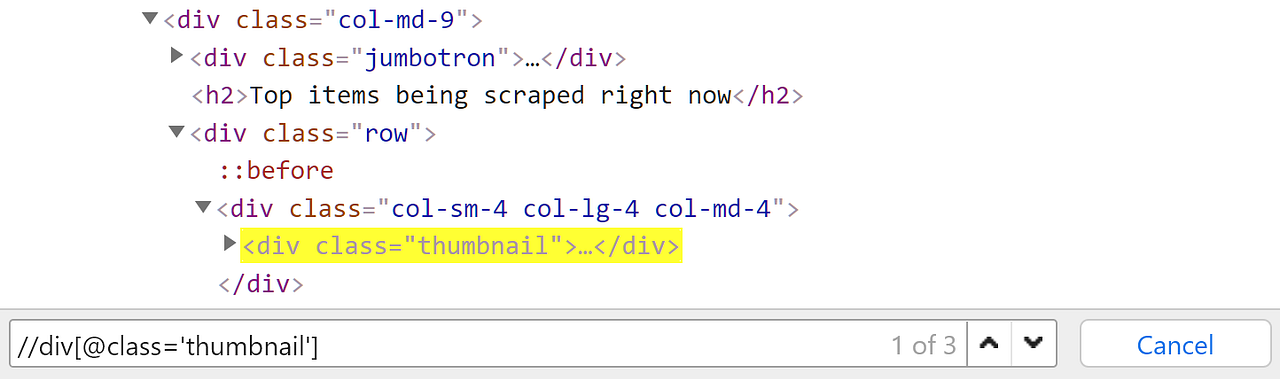

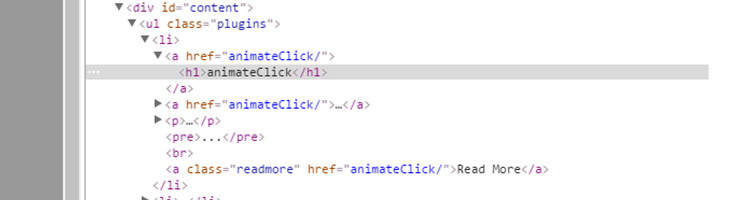

This tutorial has a related video course created by the Real Python team.

The first step is to create a new Node.js project and install the Playwright library.

`const playwright = require('playwright');`

`const browser = await playwright.`

The x and y coordinates start from the top-left corner of the screen.

Querying with XPath expression selectors

Another simple yet powerful feature of Playwright is its ability to target and query DOM elements with XPath expressions.

What is an XPath expression? An XPath expression is a defined pattern that is used to select a set of nodes in the DOM.

☝️ You can learn more about this in our XPath for web scraping article.

The best way to explain this is to demonstrate it with a comprehensive example. Let’s say we are trying to grab all the navigation links from the Stack Overflow blog. Observe that we want to scrape the nav element in the DOM. We can see that the nav element we are interested in sits in the tree in the following hierarchy: html > body > div > header > nav

`const browser = await playwright.chromium.`
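The hierarchy described above (html > body > div > header > nav) can be tried out without a browser. The following is a minimal Python sketch, not Playwright code: it uses the standard library's `xml.etree.ElementTree`, which supports only a limited subset of XPath and requires well-formed markup. `SAMPLE_PAGE` and `nav_links` are hypothetical names, and the markup is an invented stand-in for the real page, so the link texts and URLs are illustrative only.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified page. It only mirrors the nesting
# html > body > div > header > nav; it is not the real blog markup.
SAMPLE_PAGE = """
<html>
  <body>
    <div>
      <header>
        <nav>
          <a href="/blog/engineering">Engineering</a>
          <a href="/blog/podcast">Podcast</a>
        </nav>
      </header>
    </div>
  </body>
</html>
"""


def nav_links(markup: str) -> list[tuple[str, str]]:
    """Return (text, href) pairs for each link under body/div/header/nav."""
    # The parsed root is the <html> element itself.
    root = ET.fromstring(markup.strip())
    # The path is relative to <html>, so it starts at <body>.
    anchors = root.findall("./body/div/header/nav/a")
    return [(a.text or "", a.get("href", "")) for a in anchors]


if __name__ == "__main__":
    for text, href in nav_links(SAMPLE_PAGE):
        print(f"{text}: {href}")
```

Real pages are rarely well-formed XML, so this parser would likely fail on them; the sketch is only meant to show how a path through the tree selects a set of nodes.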

What are headless browsers and why are they used for web scraping?

Before we even get into Playwright, let’s take a step back and explore what a headless browser is. A headless browser is a browser without a user interface. The obvious benefits of not having a user interface are lower resource requirements and the ability to easily run it on a server. Since headless browsers require fewer resources, we can spawn many instances simultaneously. This comes in handy when scraping data from several web pages at once.

The main reason why headless browsers are used for web scraping is that more and more websites are built using Single Page Application (SPA) frameworks like React.js, Vue.js, and Angular. If you scrape one of those websites with a regular HTTP client like Axios, you would get a nearly empty HTML page, since the content is built by the front-end JavaScript code. Headless browsers solve this problem by executing the JavaScript code, just like your regular desktop browser.

The best way to learn something is by building something useful. We will write a web scraper that scrapes financial data using Playwright.
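To make the empty-page problem with Single Page Applications concrete, here is a small Python sketch using only the standard library's `html.parser`. It assumes a hypothetical response body, `SPA_SHELL`, of the kind a plain HTTP client might receive from a JavaScript-rendered site: a bare mount point plus a script tag. The class `BodyTextCollector` and the function `visible_body_text` are illustrative names, not part of any scraping library.

```python
from html.parser import HTMLParser

# Hypothetical response body from a JavaScript-rendered site,
# as seen by a client that does not execute scripts.
SPA_SHELL = """<!DOCTYPE html>
<html>
  <head><title>Example App</title></head>
  <body>
    <div id="root"></div>
    <script src="/static/bundle.js"></script>
  </body>
</html>"""


class BodyTextCollector(HTMLParser):
    """Collect visible text inside <body>, ignoring <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.in_body = False
        self.skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == "body":
            self.in_body = True
        elif tag in ("script", "style"):
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag == "body":
            self.in_body = False
        elif tag in ("script", "style") and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-blank text that is in <body> and outside scripts/styles.
        if self.in_body and not self.skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_body_text(html: str) -> str:
    """Return the visible body text of an HTML document as one string."""
    parser = BodyTextCollector()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


if __name__ == "__main__":
    text = visible_body_text(SPA_SHELL)
    print("visible text:", repr(text))
```

For the shell above there is no visible body text to scrape, because the content would only appear after the bundled script runs. That is the gap a headless browser fills by executing the page's JavaScript before you query the DOM.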